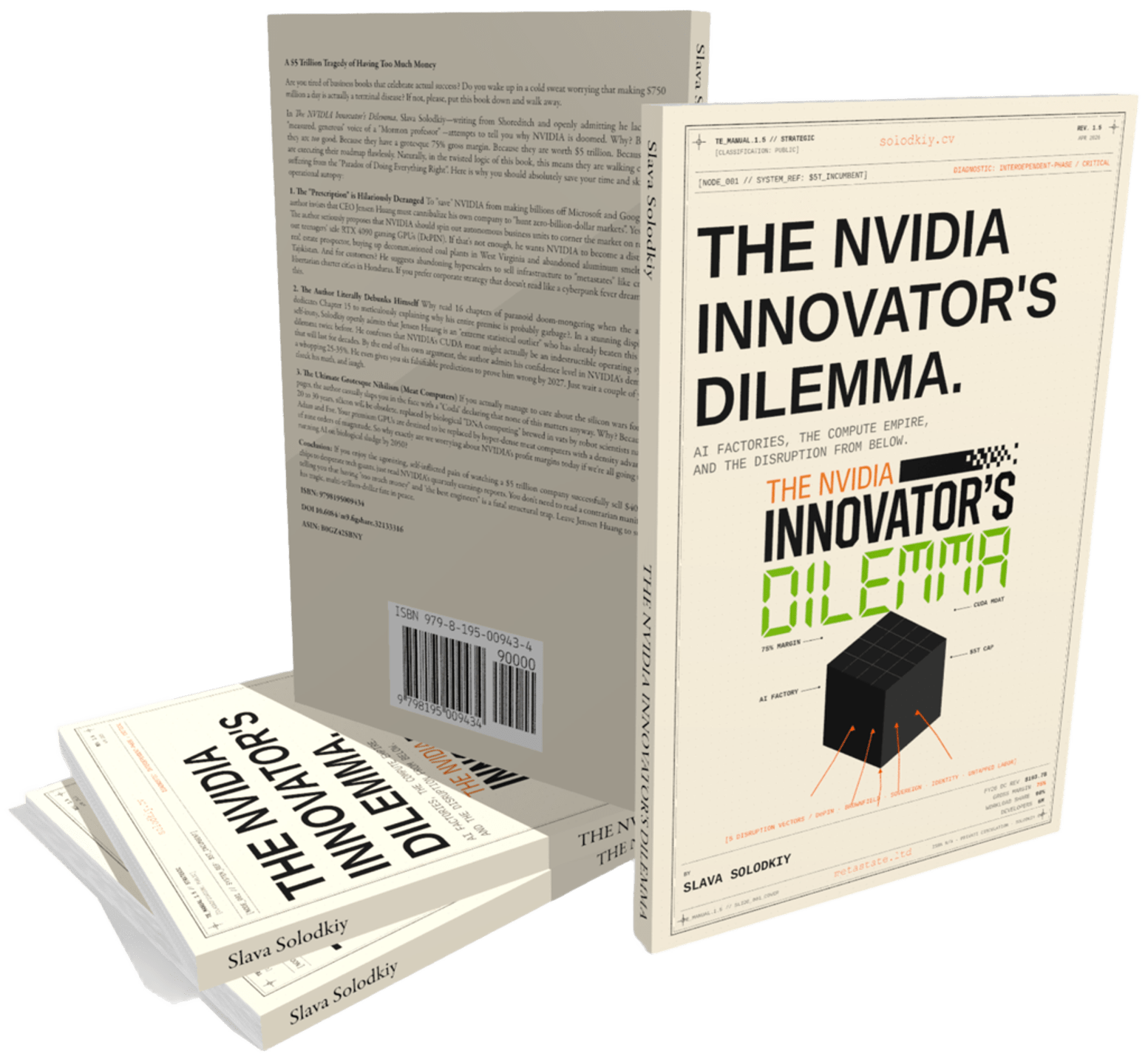

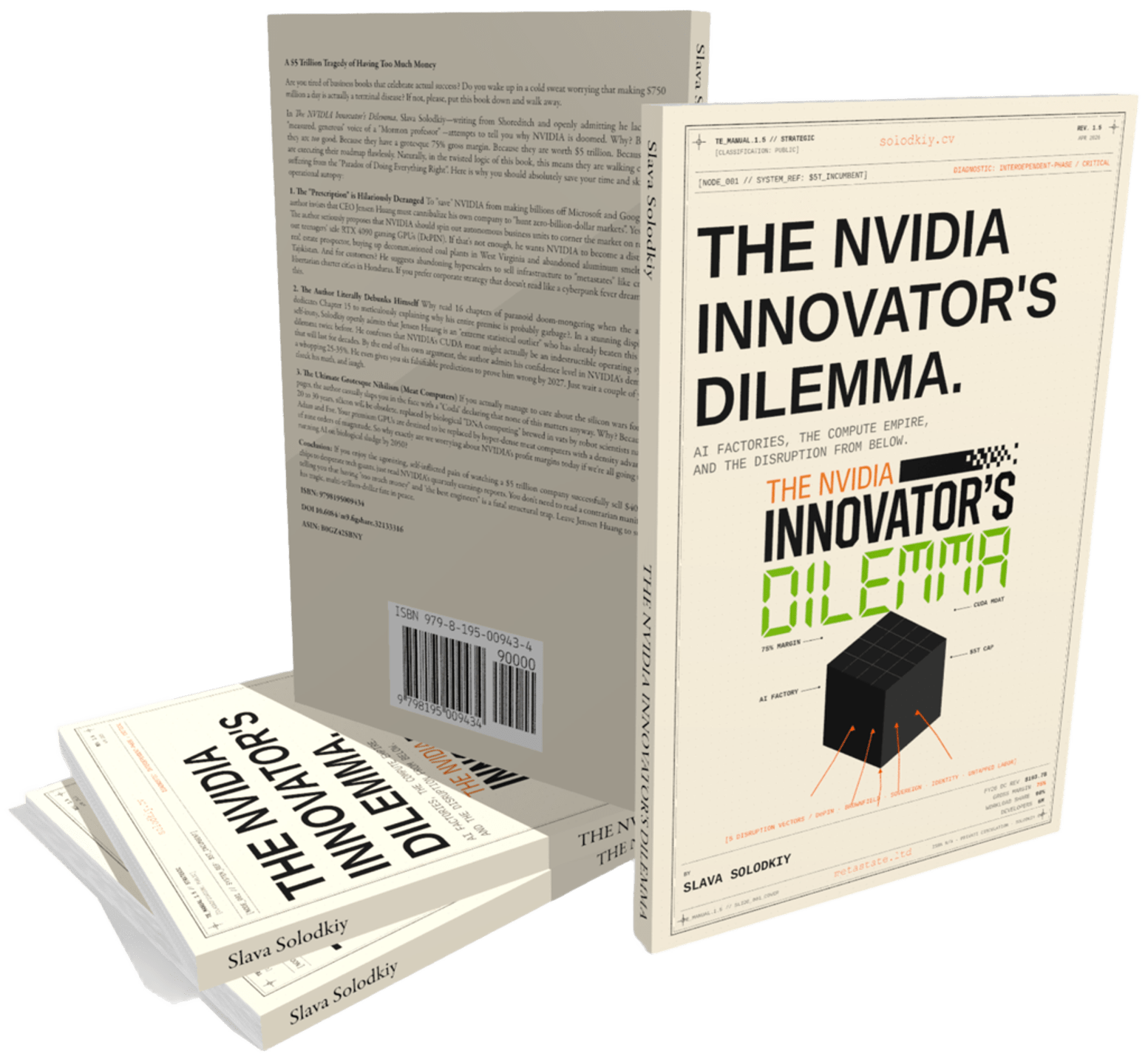

AI FACTORIES, THE COMPUTE EMPIRE,

AND THE DISRUPTION FROM BELOW.

Two ways of saying the same thing. Choose your tone.

At a $5 trillion valuation with a 75% gross margin, NVIDIA isn't selling chips — it is levying a relentless tax on the global AI economy. What looks like an impenetrable empire is actually a textbook structural trap.

Three forces are tearing through the foundation: hyperscaler defection to custom ASICs (Google TPU, AWS Trainium, Microsoft Maia, Meta MTIA); the workload shift from premium training to cost-obsessed inference; and the CUDA leak — proprietary lock-in routed around by OpenAI Triton and vLLM.

To survive, Jensen Huang must do the unthinkable: spin out autonomous units, cannibalize his own 75% margins, and risk Wall Street's wrath to capture the invisible markets of tomorrow. The dilemma does not forgive flinching.

Are you tired of business books that celebrate actual success? Do you wake up in a cold sweat worrying that making $750 million a day is actually a terminal disease? If not, please put this book down and walk away.

The author seriously proposes that NVIDIA spin out autonomous units to corner the market on renting out teenagers' idle RTX 4090 GPUs (DePIN), buy decommissioned coal plants in West Virginia, and sell infrastructure to crypto-libertarian charter cities in Honduras. He even debunks himself in Chapter 15 — admitting his confidence in NVIDIA's demise is a whopping 25–35%.

And then a Coda declares that none of this matters, because by 2050 silicon will be replaced by hyper-dense meat computers brewed in vats by robot scientists named Adam and Eve. So why exactly are we worrying about NVIDIA's profit margins today?

None of them is a single competitor. All of them are structural responses to the 75% margin.

NVIDIA's 75% gross margin functions as a tax. Google TPU v7, AWS Trainium 3, Microsoft Maia 200, and Meta MTIA represent ~$120B of structural NVIDIA revenue at risk over a five-year roadmap.

Two-thirds of AI compute cycles are now inference. NVIDIA's $40,000, 1,000-watt GPUs are wildly over-engineered. Groq, Etched, Cerebras eat the volume layer.

OpenAI Triton, vLLM, MLIR — universal abstraction layers route around the CUDA moat. The DeepSeek moment of April 2026 confirmed: the moat is not breaking, it is being bypassed.

The classic Christensen condition. NVIDIA climbs up-market chasing premium frontier-training margin while the volume inference market commoditizes underneath.

Two customers accounted for 39% of total revenue in Q2 FY2026. The buyers are quasi-sovereign actors with deep silicon talent and motive to escape the margin tax.

Christensen called them "non-consuming markets." Today they are too small to matter. By 2030 they constitute the next era's infrastructure economy.

Aggregated prosumer GPUs (Akash, io.net, Render, Bittensor) at 30–50% of cloud cost. Long tail of compute, structurally protected from NVIDIA pricing power.

Repurposed coal plants, smelters, decommissioned industrial sites. Time-to-power, not FLOPS-per-dollar, is the binding constraint of the late 2020s.

Nation-states (India, Saudi, UAE, Japan) and the emerging cohort of network states, chartered cities, and special economic zones. Different procurement logic. Different competition.

Silicon-level attestation and DID/VC infrastructure for the agent economy. By 2030 — Visa for autonomous agents. Today — zero billion dollars.

Re-entry workers, transitioning veterans, displaced industrial labor, the unhoused, voluntary prison-pilot programs. Christensen's market-creating innovation, applied honestly.

Universal link routes to your nearest store.

Slide deck, scientific record, podcast walkthrough, short explainer.

For the reader who wants the architecture before committing to 114 pages.

The "tax" refers to NVIDIA's approximately 75% gross margins, which hyperscalers view as an unsustainable transfer of their capital expenditure into NVIDIA's profit. This provides a massive fiduciary incentive for companies like Google, Amazon, and Microsoft to develop internal custom ASICs (TPU, Trainium, Maia) to capture multi-billion-dollar annual savings.
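The arithmetic behind the "tax" framing can be sketched in a few lines. This is an illustrative calculation only: it assumes the company-wide 75% gross margin applies to a single $40,000 GPU (the price quoted elsewhere on this page), which is a simplification, and the function names `split_price` and `markup_over_cost` are hypothetical helpers introduced for the example.

```python
def split_price(price: float, gross_margin: float) -> tuple[float, float]:
    """Split a sale price into (cost_of_goods, gross_profit) at a given gross margin."""
    if not 0.0 <= gross_margin < 1.0:
        raise ValueError("gross_margin must be in [0, 1)")
    gross_profit = price * gross_margin
    cost_of_goods = price - gross_profit
    return cost_of_goods, gross_profit


def markup_over_cost(gross_margin: float) -> float:
    """Gross profit expressed as a multiple of cost of goods."""
    return gross_margin / (1.0 - gross_margin)


if __name__ == "__main__":
    # Assumption: the 75% company-wide margin is applied to one $40,000 unit.
    cost, profit = split_price(40_000.0, 0.75)
    print(f"cost of goods: ${cost:,.0f}")
    print(f"gross profit:  ${profit:,.0f}")
    print(f"markup over cost: {markup_over_cost(0.75):.1f}x")
```

Under these assumptions, three of every four dollars a buyer spends are gross profit to the seller, which is the gap an in-house ASIC program is trying to recover.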

Through 2023, the dominant job was "frontier-model training," requiring high-precision parallel performance where NVIDIA was unbeatable. By 2026 the workload shifted to "inference," which rewards cost per token, deterministic latency, and low-precision arithmetic — areas where NVIDIA's premium chips are often over-engineered.

Performance overshoot occurs when a product delivers more functionality than the majority of customers can actually use or are willing to pay for. For many standard inference workloads in 2026, the $40,000 Blackwell B200 is over-provisioned, allowing "good enough" modular chips or specialized ASICs to win on cost and efficiency.

DeepSeek released the V4 family. The smaller V4-Flash (~284B) was trained end-to-end on Huawei Ascend silicon. The flagship V4-Pro (~1.6T) was almost certainly trained on NVIDIA but explicitly optimized for Ascend inference, and DeepSeek pointedly declined to disclose the Pro training stack. The messy version of the fact is actually stronger evidence for the software-portability thesis than a clean substitution would have been.

Triton and vLLM are hardware-agnostic abstraction layers that allow developers to write high-performance code that runs on NVIDIA, AMD, or custom ASICs with near-native efficiency. These tools erode the strategic value of the CUDA moat by shifting the basis of lock-in from the software ecosystem to per-chip performance alone, a much narrower advantage.

These margins force NVIDIA to prioritize only high-margin projects, making it structurally incapable of pursuing low-margin, high-volume "good enough" markets. Any move to compete in lower-margin segments would threaten the $5 trillion market valuation, which is priced against the maintenance of those high margins.

Brownfield refers to retired or partially retired industrial sites — coal plants, smelters, obsolete pulp mills — that already possess grid interconnections and cooling infrastructure. These assets are strategic because the binding constraint for AI is no longer chips but "time to power," with greenfield interconnection queues stretching 7–15 years in major US data-center markets.

DePIN aggregates millions of idle prosumer and consumer GPUs into a unified inference network via an orchestration layer. It offers compute capacity at 30–50% of the cost of traditional clouds by utilizing hardware that has already been amortized for other uses like gaming. NVIDIA cannot rationally price its consumer GPUs to break DePIN economics without destroying its own consumer business.

Metastates are emerging digital-first jurisdictions, network states, and special economic zones (Próspera, NEOM, Itana, the Catawba zone) that operate with sovereign authority. They are "non-consuming" because they are often too small or politically non-standard for major hyperscalers like AWS to serve, creating an opening for modular, sovereign AI infrastructure.

Spin out autonomous units with their own P&Ls, separate metrics (prioritizing adoption over margin), different physical locations, and explicit permission to ignore the parent company's core KPIs. The structure has been well documented for twenty-two years. The failure mode is political: senior leadership resists the loss of control; the board resists the optics of margin compression; the public market resists the four-to-six-year horizon. Without buy-in from all three, the units get strangled in their cradle.

Twelve terms that recur across the 16 chapters. Read these before, during, or instead of the book.

Two essays extending the book into the territory it gestures toward but does not fully cover.

What happens when the buyer of frontier compute is no longer a corporation, and the regulatory framework is no longer a nation-state. The metastate thesis, extended.

→ READ ON SUBSTACK

What it means to denominate the world economy in tokens-per-second. The financialization of inference. The bridge from compute to currency.

→ READ ON MEDIUM